SXSW AI Censorship: Trademark Bot Silenced Nonprofit Posts

SXSW's AI trademark bot mass-removed protest posts with no human review. A homelessness nonprofit lost its voice. No counter-notice. No recourse.

A music festival's AI trademark scanner flagged Instagram accounts for the crime of typing "SXSW" — then Instagram's own AI auto-deleted the posts. No human reviewed a single removal. A nonprofit fighting homelessness in Austin lost their voice; a coalition of 100+ protest events was silenced before it could reach anyone. Two automated systems talked to each other and legitimate political speech disappeared. This is 404 Media's beat — and they've been documenting exactly this feedback loop across platforms, universities, and now federal courts.

The AI Trademark Bot That Couldn't Tell Protest from Piracy

South by Southwest (SXSW) — Austin's massive annual music, film, and tech festival — hired BrandShield, an AI-powered trademark detection tool (software that automatically scans the internet for unauthorized use of a brand's name or logo), to police its brand online.

BrandShield didn't just catch counterfeit merchandise sellers. It mass-reported Instagram accounts for any mention of the text "SXSW" — regardless of context or intent.

Vocal Texas, a nonprofit organization working to end homelessness and poverty in the Austin area, had Instagram posts automatically removed. They hadn't used SXSW's logo. They hadn't created fake event branding. They'd simply typed "SXSW" — the name of the festival they were criticizing. That was enough for the AI to flag them and for Instagram's system to act.

More than 100 counter-events were coordinated under the banner "Smash By Smash West" to protest SXSW and its impact on Austin communities. Many of the organizing posts were removed by the same automated system that was supposed to catch fake merchandise.

The organizer known as Burnice described what actually happened behind the scenes: "All of that is actually just happening by robots talking to robots. It's an AI system that mass reports these accounts, and then probably an AI system at Instagram that just sorts through and approves or rejects."

Mathew Zuniga of Tiny Sounds Collective, another group affected, was more blunt: "I think it was more of a deliberate attempt to take down anti-South By Southwest rhetoric online."

Why Removed Content Never Comes Back

If you've ever had a YouTube video wrongly taken down for a copyrighted song, you know that the DMCA (Digital Millennium Copyright Act, the US federal law governing copyright online since 1998) gives you a counter-notice process. You file a dispute, a window of 10 to 14 business days opens, and if the claimant doesn't file a lawsuit in that time, your content is restored.

Trademark law has no such mechanism. There is no mandatory counter-notice requirement. There is no restoration timer. Once BrandShield files the complaint and Instagram's automated system approves it, the content stays down permanently — unless the company that filed the complaint decides, voluntarily, to withdraw it.
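To make the asymmetry concrete, here is a minimal sketch of the two paths. The function names are hypothetical, public holidays are ignored, and the lawsuit exception is not modeled. The only rule it encodes is the DMCA's restoration window of 10 to 14 business days after a counter-notice is received; for trademark complaints it returns nothing, because no statutory window exists.

```python
from datetime import date, timedelta
from typing import Optional, Tuple


def add_business_days(start: date, days: int) -> date:
    """Advance `days` weekdays past `start` (Mon-Fri only; holidays ignored)."""
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday through Friday
            remaining -= 1
    return current


def restoration_window(claim_type: str,
                       counter_notice_received: date) -> Optional[Tuple[date, date]]:
    """Return (earliest, latest) restoration dates, or None when the law
    provides no mandatory restoration path for this kind of complaint."""
    if claim_type == "copyright":
        return (add_business_days(counter_notice_received, 10),
                add_business_days(counter_notice_received, 14))
    if claim_type == "trademark":
        return None  # no counter-notice timer to start
    raise ValueError(f"unknown claim type: {claim_type}")


# Hypothetical example date: Monday, March 3, 2025
print(restoration_window("copyright", date(2025, 3, 3)))
print(restoration_window("trademark", date(2025, 3, 3)))
```

For a counter-notice received on that Monday, the copyright window runs from March 17 to March 21, 2025. The trademark call returns `None`.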

Cara Gagliano, an attorney at the EFF (Electronic Frontier Foundation — a nonprofit organization defending digital civil liberties and free speech since 1990), put the legal absurdity plainly: "You're allowed to use a company's name to talk about the company, right? How else are you going to do it?"

On why fighting back is nearly impossible: "When you have these takedowns... they don't have any incentive to retract the complaint, and so the content stays down."

- Copyright (DMCA): Mandatory counter-notice → window of 10 to 14 business days → content restored if no lawsuit filed

- Trademark: No counter-notice requirement → content permanently down → zero mandatory restoration path

- BrandShield's method: Keyword text matching (the word "SXSW"), not logo detection or counterfeit analysis

- Instagram's moderation: By organizers' accounts, automated approval with no sign of a human reviewer in the loop
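The failure mode of context-free keyword matching is easy to reproduce. The sketch below is not BrandShield's code, which is not public. It is a hypothetical matcher run on invented sample posts, showing why a rule keyed only to the string "SXSW" cannot separate a counterfeit seller from a critic.

```python
import re
from dataclasses import dataclass

# Hypothetical rule: flag any standalone mention of the brand name.
BRAND_PATTERN = re.compile(r"\bsxsw\b", re.IGNORECASE)


@dataclass
class Post:
    account: str
    text: str


def naive_flag(post: Post) -> bool:
    """Flag a post if its text contains the brand name, ignoring context."""
    return BRAND_PATTERN.search(post.text) is not None


# Invented posts for illustration only.
posts = [
    Post("fake_merch_shop", "Official SXSW wristbands, 50% off, DM to buy"),
    Post("local_nonprofit", "Join our counter-event protesting SXSW this week"),
    Post("coffee_cart", "New spring menu drops Friday"),
]

flagged = [p.account for p in posts if naive_flag(p)]
print(flagged)  # ['fake_merch_shop', 'local_nonprofit']
```

The seller and the protester are flagged alike, because the rule never looks at what the post says about the brand. Telling them apart would take some signal beyond the keyword, or a human reviewer.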

BrandShield disputed 404 Media's characterizations but provided no detailed explanation of its false-positive rate or what recourse, if any, exists for wrongly flagged legitimate speech.

The Same AI Automation Pattern Is Now Flooding Federal Courts

The same dynamic — AI tools deployed at scale without safeguards, creating systemic problems that nobody planned for — is now appearing inside America's court system.

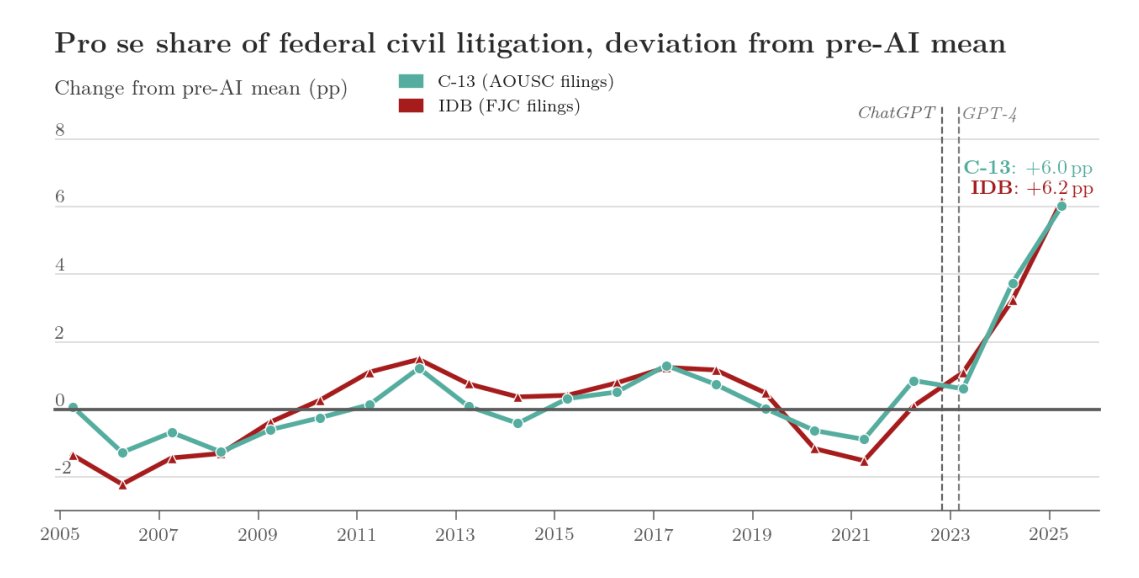

Researchers analyzed 4.5 million non-prisoner civil court cases spanning 20 years (2005-2025) and reviewed 46 million PACER entries (PACER is the Public Access to Court Electronic Records system — the federal government's database of court dockets used by lawyers and the public). Their central finding: pro se cases — civil cases where individuals represent themselves without a hired attorney — climbed from 11% in the 2005-2022 baseline period to 16.8% by 2025.
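The two percentages can be read two ways, and the difference matters. A quick calculation, using only the figures reported above, separates the percentage-point change from the relative increase.

```python
baseline_share = 0.11   # pro se share, 2005-2022 baseline (from the article)
share_2025 = 0.168      # pro se share by 2025 (from the article)

# Absolute change, in percentage points
point_change = (share_2025 - baseline_share) * 100
# Change relative to the baseline share
relative_change = (share_2025 - baseline_share) / baseline_share * 100

print(f"{point_change:.1f} percentage points")     # 5.8 percentage points
print(f"{relative_change:.1f}% relative increase")  # 52.7% relative increase
```

A rise of 5.8 points sounds modest, but it means the pro se share of the docket grew by roughly half.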

Researchers believe AI writing tools are enabling the shift. People who previously couldn't navigate dense legal language are now drafting complex federal complaints with AI assistance. By 2026, AI detection software flagged 18% of sampled court complaints as AI-written, up from essentially zero before 2023. The analysis ran Pangram (a tool that examines writing patterns and sentence structures to estimate the probability that a text was generated by AI) on a random sample of 1,600 complaints.
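A sample of 1,600 carries some sampling uncertainty. As a rough sketch, assume the 18% figure was measured on the full 1,600-complaint random sample, which the reporting does not state explicitly. The calculation also ignores the detector's own error rate. On those assumptions, a standard normal-approximation interval looks like this:

```python
import math

sample_size = 1600      # complaints sampled (from the article)
flagged_share = 0.18    # share flagged as AI-written (from the article)

flagged_count = round(sample_size * flagged_share)
standard_error = math.sqrt(flagged_share * (1 - flagged_share) / sample_size)
margin = 1.96 * standard_error  # 95% level, normal approximation

low, high = flagged_share - margin, flagged_share + margin
print(flagged_count)                 # 288
print(f"{low:.1%} to {high:.1%}")    # 16.1% to 19.9%
```

Under those assumptions the estimate is good to about two percentage points either way. If the 18% applies only to the most recent filings within the sample, the real interval would be wider.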

There's a genuine tension in this data. AI is democratizing access to the legal system for people who can't afford a $400-per-hour attorney. But self-represented cases are, as researchers put it, "heavier" — requiring more clarifying motions, more back-and-forth, and significantly more time from judges and court clerks. Courts built for a different era of filing volume are absorbing a surge they weren't designed for.

Researchers are careful to note the limits: the study is descriptive, identifying a correlation between the rise of AI writing tools and increased pro se filings, but not claiming a direct causal link to any specific product.

Universities Are Running the Same Playbook

Arizona State University launched Atomic — a platform that automatically converts faculty members' recorded lectures into AI-generated learning modules (structured educational content including summaries, quizzes, and interactive lessons, built without the professor doing additional work).

The professors found out after it was already running. Faculty described feeling "blindsided and angered" by the discovery that their recorded lectures had been spliced, transformed, and reused without their knowledge or consent. No opt-in process. No advance notification. No consultation before deployment.

404 Media tested Atomic's AI-generated output directly and found the modules "academically weak and even inaccurate" — a significant problem when the entire value proposition is educational quality at scale. ASU has not publicly addressed the accuracy findings.

The thread connecting BrandShield's SXSW deployment, ASU's Atomic rollout, and the AI court filing surge is the same: an institution deploys an automated system at scale, the people affected find out after the consequences are already in motion, and no meaningful recourse was built into the process before launch.

The Outlet Documenting It All — While Living It

Understanding how AI automation systems affect everyday life is harder when the journalism covering it gets suppressed. 404 Media became profitable within just 6 months of launching — a remarkable result for an independent newsroom in an era when most digital outlets are struggling. They're now partnering with Wired for broader content distribution.

The irony: 404 Media says Meta has throttled its reporting on drug advertisement networks, in what the outlet describes as platform auto-suppression: its posts get effectively "auto-killed" before reaching audiences. The publication best equipped to expose automation abuse is itself a target of automated throttling. Its staff, including Matthew Gault, Emanuel Maiberg, Sam Cole, Joseph Cox, and Jason Koebler, is mapping the pattern across industries.

If you work in content creation, nonprofit communications, academia, or law, their reporting is directly relevant to your work. Watch for the gap between "AI deployed" and "humans consulted" — it keeps widening. Subscribe via RSS at 404media.co/rss or read the full SXSW investigation directly. The next institution to quietly run an AI system on your content, your posts, or your legal filings may not tell you until it's already done.
