OpenAI GPT-Realtime-2 Ends Voice AI Dead Air — 128K Context

OpenAI GPT-Realtime-2 fixes voice AI dead air: 128K context, 96.6% audio accuracy, 5 reasoning levels. Realtime API now generally available.

OpenAI shipped three realtime audio models on May 8, 2026 — and the headline isn't raw speed, it's the end of the dead-air problem that has blocked voice AI automation deployments for years. GPT-Realtime-2, the flagship model, narrates its own thinking while it works instead of going silent for several seconds mid-task while a caller waits on hold.

This matters because dead air kills voice AI in production. Call centers, travel booking apps, and medical intake lines have all stalled on voice AI pilots for one reason: the system goes quiet, and the caller hangs up. GPT-Realtime-2 is the first model built specifically to prevent that — and it exits beta today as a generally available product.

The Dead-Air Problem — Why Voice AI Keeps Failing in the Real World

Every voice AI demo looks smooth. Production breaks it in seconds: a caller asks to rebook a flight with a specific seat on a different airline. The AI needs to check availability, apply a promo code, and verify the traveler profile — three separate requests that take 4–8 seconds. Previous models went silent. Users assumed the call dropped.

GPT-Realtime-2 solves this with preamble phrases — short spoken filler lines ("Let me check that for you," "Give me just a moment while I pull that up") that fire automatically while the model handles parallel tool calls (making multiple requests to external services at the same time). Developers don't write these phrases manually; the model generates contextually appropriate ones based on what it's doing at that moment.

That's paired with interruption recovery: if a caller cuts in mid-sentence, GPT-Realtime-2 adjusts its response without losing its place in the multi-step task. Predecessor models frequently stalled or restarted entirely when interrupted mid-flow.

GPT-Realtime-2: 128K Context, Five Reasoning Levels, and Adaptive Tone Control

The most structurally important upgrade in GPT-Realtime-2 is the context window (how much conversation the model can hold in memory at once): it now supports 128K tokens (a token is roughly a word or word fragment), up from 32K in the prior version. That's a 4x expansion. In practical terms: a 15-minute call with hundreds of back-and-forth exchanges no longer causes the model to "forget" what the caller said five minutes ago.

The second major addition is adjustable reasoning effort — five levels developers can set per request:

- Minimal / Low — fast, cheap, designed for simple FAQ lookups or account balance checks

- Medium — standard for most customer support interactions

- High / xhigh — full compute for complex multi-step tasks: insurance claims, international travel bookings, medical intake forms

The default is "low," keeping latency and cost down for most queries. A developer building a billing bot sets low reasoning for "what's my balance?" and xhigh for "dispute this charge, apply my loyalty credit, and email me a confirmation." This per-request tuning is the quiet cost-management win buried inside this release.

GPT-Realtime-2 also adds tone control: the model speaks calmly during complex problem-solving, shifts to an empathetic register when it detects caller frustration, and turns upbeat after a successful transaction. It also supports industry-specific vocabulary including healthcare terminology, proper noun recognition for brand names, and specialty terms across verticals.

OpenAI Realtime API: Three Voice AI Models for Three Use Cases

GPT-Realtime-2 — full-stack voice AI agent

The primary model for building conversational AI applications. Handles multi-step reasoning, parallel tool execution, tone control, and interruption recovery. Uses per-token pricing: $32 per 1M audio input tokens, with cached tokens at just $0.40 per 1M — a significant discount for repeated context. Output costs $64 per 1M audio output tokens. Three session types are available: voice-agent, translation, and transcription modes.

GPT-Realtime-Translate — live AI translation across 70+ languages

A purpose-built translation model that converts spoken audio from 70+ input languages into 13 output languages in real time. It is a dedicated translation pipe — it does not hold conversational context across turns and cannot call external functions. Use cases include live event interpreting, multilingual customer support, and real-time meeting captions. Priced at $0.034 per minute: a 30-minute interpreted meeting costs roughly $1.02 — well below human interpreter rates.

GPT-Realtime-Whisper — streaming speech-to-text with tunable latency

OpenAI's original Whisper model (the speech-to-text system trained on 680,000 hours of multilingual audio) worked in batch mode — you submitted a completed audio file and received a transcript after processing. GPT-Realtime-Whisper streams text as words are spoken, with a developer-controlled latency tradeoff: lower latency produces earlier text with more partial words; higher latency yields cleaner transcripts with a slight delay. Priced at $0.017 per minute — exactly half the cost of GPT-Realtime-Translate.

GPT-Realtime-2 Benchmark Results: Audio Reasoning Accuracy vs. GPT-Realtime-1.5

OpenAI published direct comparisons between GPT-Realtime-2 and GPT-Realtime-1.5, the model it replaces:

- Big Bench Audio (a standardized test for multi-step audio reasoning tasks): 96.6% vs 81.4% for GPT-Realtime-1.5 — a +15.2 percentage point improvement, or 18.7% relative gain

- Audio MultiChallenge (a benchmark measuring how accurately a model follows complex spoken instructions): 48.5% at xhigh reasoning vs 34.7% for GPT-Realtime-1.5 — a +13.8 point jump representing a 39.8% relative improvement

- Context window: 128K tokens vs 32K tokens — 4x larger

The instruction-following gap is the most practically significant number here. A 39.8% relative improvement means far fewer "I'm sorry, could you repeat that?" failures on complex spoken requests — exactly the failure mode that has blocked production voice deployments across industries for the past two years.

Realtime API Pricing and Voice AI Automation ROI Breakdown

Here's the complete pricing structure across all three models:

- GPT-Realtime-2 input: $32 / 1M audio input tokens | $0.40 / 1M cached input tokens

- GPT-Realtime-2 output: $64 / 1M audio output tokens

- GPT-Realtime-Translate: $0.034 / minute

- GPT-Realtime-Whisper: $0.017 / minute

A quick ROI check: a customer support agent handles roughly 30 calls per day at 5 minutes each — 150 minutes of talk time. At $0.034/min for translation or the equivalent token rate for full voice agent conversations, daily API costs per agent run in the low single-digit dollar range, well below the $25–40/hour cost of a human agent. The five reasoning levels let teams optimize further by running minimal compute on simple queries while reserving xhigh for cases that actually need it.

The Realtime API officially exits beta with this release, becoming generally available (GA) — meaning it now carries OpenAI's standard SLA (service-level agreement, a formal uptime and reliability guarantee) for production deployments. Two new voices — Cedar and Marin — are available exclusively with this launch. Enterprise teams that have been waiting for GA status before investing engineering resources now have their green light.

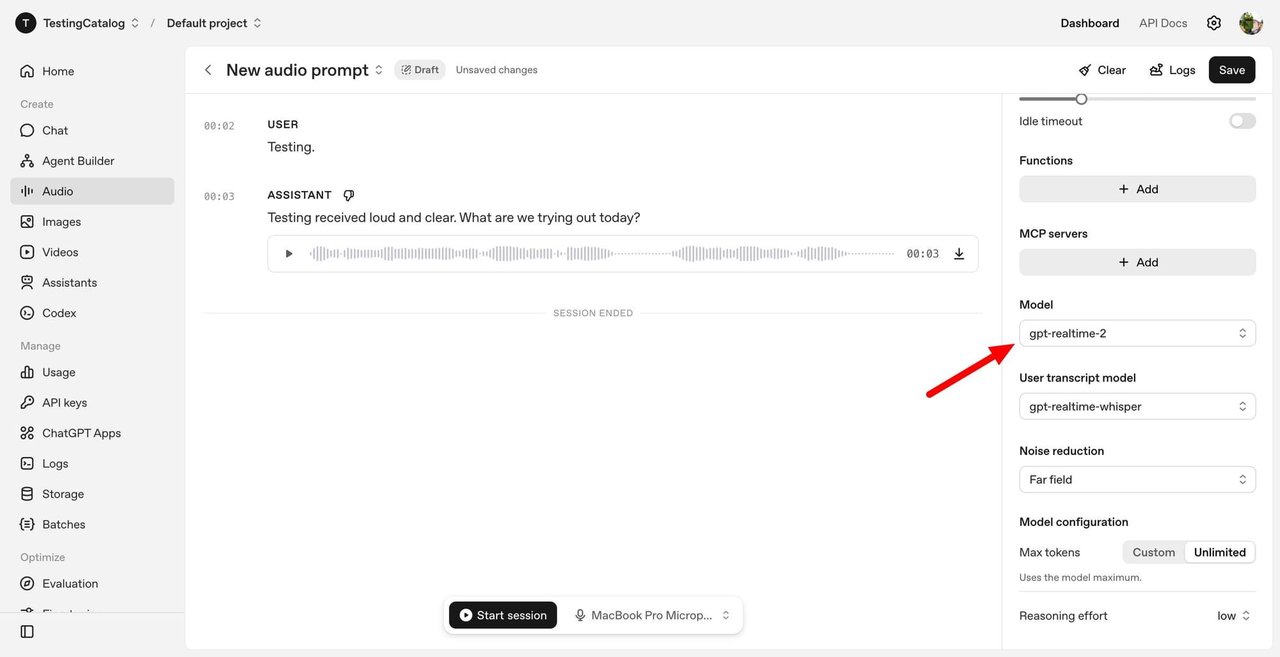

You can test GPT-Realtime-2 right now in the OpenAI Playground — select the realtime model, choose a reasoning level, and speak into your browser. No extra infrastructure needed. For a step-by-step guide to integrating voice AI automation into your own customer support or transcription workflows, see the AI Automation Guides — particularly the voice agent and multilingual support sections.

Related Content — Get Started | Guides | More News

Sources

Stay updated on AI news

Simple explanations of the latest AI developments