AI Security Audit: Claude Mythos Finds 423 Firefox Bugs

Claude Mythos found 423 Firefox security flaws in one month — 15x normal. AI auditing caught bugs hidden for 20 years that human reviewers missed.

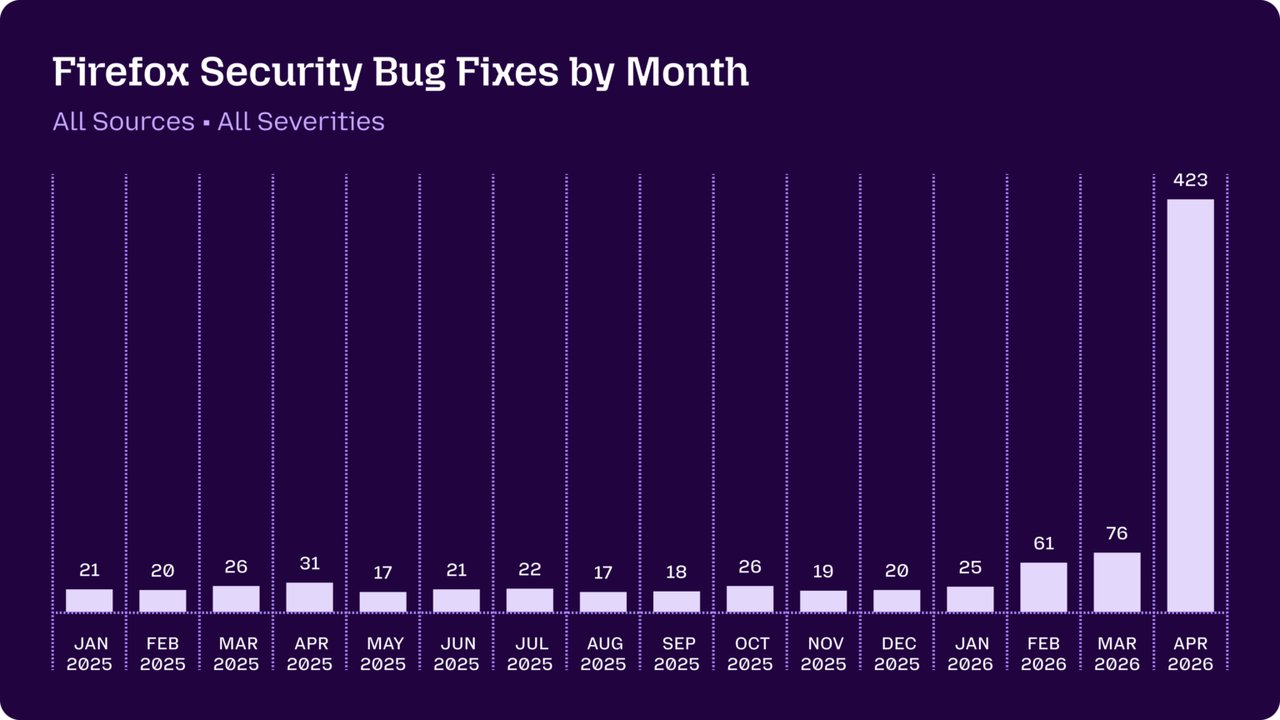

Just a few months ago, Mozilla's own security team described AI-generated bug reports as "unwanted slop." Then they deployed Claude Mythos and fixed 423 security vulnerabilities in Firefox in a single month. That is roughly 15 to 20 times the 20 to 30 fixes per month that held steady throughout 2025. The April 2026 surge didn't just produce more fixes; it revealed bugs that had survived for 15 to 20 years inside one of the world's most-audited open-source codebases.

One of those bugs had been sitting in Firefox's XSLT processor for 20 years. (XSLT transforms XML documents, a data format used in everything from RSS feeds to enterprise software pipelines, into HTML or other formats.) A second vulnerability, in the HTML <legend> element (the caption of a <fieldset>, the box that groups related form fields), had gone unnoticed for 15 years. Neither was cosmetic. Both represented genuine attack surface that shipped to hundreds of millions of Firefox users in every release of the past 15 to 20 years.

Firefox Security Transformation: The Numbers Behind Mozilla's AI Audit

Firefox's baseline throughout 2025 was remarkably consistent: between 20 and 30 security bugs fixed per month. That's not a sign of neglect — Mozilla has one of the most experienced browser security teams in the industry. It reflects a hard ceiling on what human review can sustain at scale, across millions of lines of C++ code.

Claude Mythos (Anthropic's AI model specifically fine-tuned for security code analysis) changed that ceiling in a single deployment cycle. April 2026 recorded 423 confirmed, patched vulnerabilities. Not speculative AI reports. Not hallucinated findings that wasted engineer time. Reproducible flaws that Mozilla's team verified and shipped as fixes.

- Pre-Mythos baseline (all of 2025): 20–30 security fixes per month

- April 2026 post-Mythos: 423 fixes in one month

- Throughput increase: roughly 14–21x over the 2025 baseline (423 ÷ 30 ≈ 14; 423 ÷ 20 ≈ 21)

- Oldest bug caught: 20-year-old flaw in the XSLT processor

- Second oldest: 15-year-old flaw in the HTML <legend> element
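The throughput multiplier is plain division of April's count by each end of the 2025 range. A minimal Python sketch using only the figures listed above:

```python
from __future__ import annotations

# Figures from the list above.
BASELINE_LOW, BASELINE_HIGH = 20, 30  # security fixes per month, 2025
APRIL_2026 = 423                      # security fixes in April 2026


def multiplier_range(current: int, low: int, high: int) -> tuple[float, float]:
    """Return the (smallest, largest) ratio of `current` to a baseline range."""
    # Dividing by the high end of the baseline gives the smallest ratio, and vice versa.
    return current / high, current / low


low_x, high_x = multiplier_range(APRIL_2026, BASELINE_LOW, BASELINE_HIGH)
print(f"{low_x:.0f}x to {high_x:.0f}x")  # prints "14x to 21x"
```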

Mozilla's own statement captures just how unexpected the shift was: "It is difficult to overstate how much this dynamic changed for us over a few short months."

Why AI Bug Reports Were "Slop" — and Why They Stopped Being Slop

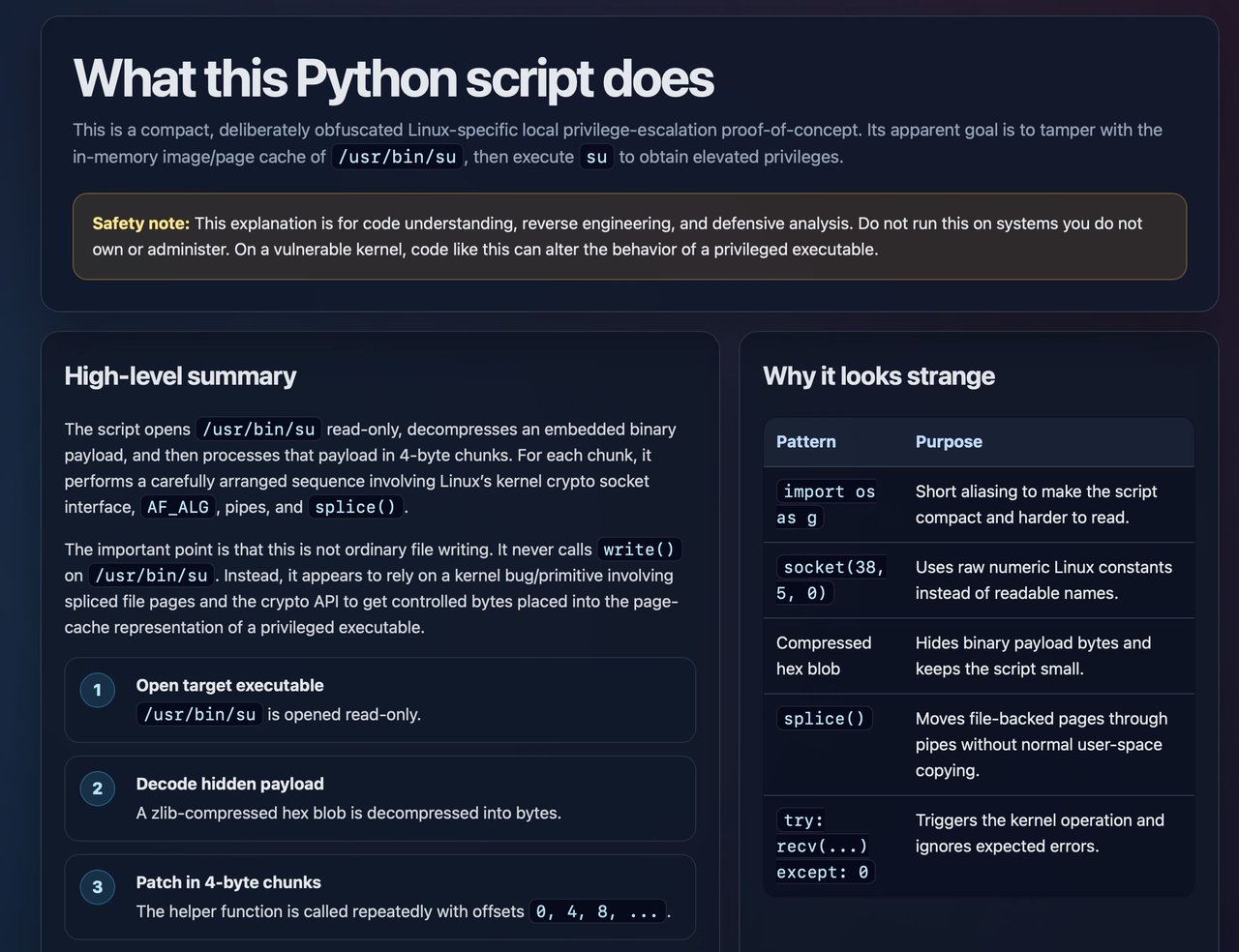

Through 2024 and most of 2025, the open-source security community had a well-documented problem with AI-generated vulnerability reports. Automated tools produced high volumes of speculative findings — technically plausible on the surface, but almost always wrong when engineers actually tested them. The false-positive rate was so severe that many maintainers instituted explicit policies against accepting AI-generated bug reports entirely.

Mozilla shared this frustration. Their security team wrote bluntly: "Just a few months ago, AI-generated security bug reports to open source projects were mostly known for being unwanted slop."

The reversal with Claude Mythos wasn't purely about model capability; it was also about structured output. Mozilla's deployment used a custom harness (a repeatable automation pipeline that feeds targeted code sections to the AI and collects formal, structured results). Instead of asking the model to "find bugs," the harness required Claude Mythos to produce findings in the same format human security researchers use: precise reproduction steps, affected component identification, severity classification, and exploitability assessment. That specificity is what collapsed the false-positive rate and made reports actionable rather than dismissible.
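To make "structured output" concrete: a harness can refuse any report that does not fill in all four elements named above. A minimal Python sketch, assuming findings arrive as JSON-like dicts; the `Finding` class, the field names, and the severity labels are hypothetical illustrations, not Mozilla's actual schema:

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical severity labels and required text fields.
SEVERITIES = {"low", "moderate", "high", "critical"}
REQUIRED_TEXT = ("component", "severity", "exploitability")


@dataclass
class Finding:
    component: str                 # affected component, e.g. a module name
    reproduction_steps: list[str]  # concrete steps an engineer can re-run
    severity: str                  # one of SEVERITIES
    exploitability: str            # assessment of how reachable the bug is


def parse_finding(raw: dict) -> Finding | None:
    """Accept a model-produced report only if every required field is filled in."""
    # Text fields must be present, be strings, and be non-blank.
    for key in REQUIRED_TEXT:
        value = raw.get(key)
        if not isinstance(value, str) or not value.strip():
            return None
    # Reproduction steps must be a non-empty list of non-blank strings.
    steps = raw.get("reproduction_steps")
    if not isinstance(steps, list) or not steps:
        return None
    if not all(isinstance(s, str) and s.strip() for s in steps):
        return None
    severity = raw["severity"].strip().lower()
    if severity not in SEVERITIES:
        return None
    return Finding(
        component=raw["component"].strip(),
        reproduction_steps=[s.strip() for s in steps],
        severity=severity,
        exploitability=raw["exploitability"].strip(),
    )


# A vague report is rejected; a complete one is accepted.
vague = {"component": "xslt-processor", "severity": "high"}
complete = {
    "component": "xslt-processor",
    "reproduction_steps": ["Load test.xml", "Observe crash during transform"],
    "severity": "High",
    "exploitability": "Reachable from web content via an XSL stylesheet",
}
print(parse_finding(vague))              # None
print(parse_finding(complete).severity)  # high
```

Rejecting incomplete reports at parse time means a free-form "there might be a bug here" never reaches an engineer's queue.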

The 20-Year Bug: What Claude Mythos AI Security Auditing Found in Legacy Code

The XSLT processor finding deserves attention on its own terms. XSLT (Extensible Stylesheet Language Transformations) is a decades-old programming language used to convert XML data into other formats — the transformation engine behind RSS readers, document converters, and enterprise data pipelines. Firefox ships its own XSLT engine, and that engine contained an exploitable flaw dating back to approximately 2006.

Over those 20 years, Firefox received thousands of security patches. The XSLT processor was reviewed, tested, and shipped in hundreds of browser releases across Windows, macOS, and Linux. The flaw wasn't buried in an obscure corner: XSLT processing runs whenever Firefox loads an XML document whose stylesheet reference points to an XSL stylesheet, which means any web page can trigger it. Hundreds of contributors looked at this code. None caught it.

Claude Mythos flagged it within its first audit cycle.
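The trigger described above is an `<?xml-stylesheet?>` processing instruction at the top of an XML document. The Python sketch below only detects that instruction and reads its pseudo-attributes; it does not run XSLT (Python's standard library has no XSLT engine), and the sample feed is made up for illustration:

```python
from __future__ import annotations

import re
from xml.dom import Node, minidom

# Pseudo-attributes in the instruction look like name="value" or name='value'.
PSEUDO_ATTR = re.compile(r'([\w-]+)\s*=\s*(["\'])(.*?)\2')


def stylesheet_refs(xml_text: str) -> list[dict[str, str]]:
    """List the pseudo-attributes of every top-level <?xml-stylesheet?> instruction."""
    doc = minidom.parseString(xml_text)
    refs = []
    for node in doc.childNodes:  # prolog instructions are children of the Document node
        if node.nodeType == Node.PROCESSING_INSTRUCTION_NODE and node.target == "xml-stylesheet":
            refs.append({name: value for name, _quote, value in PSEUDO_ATTR.findall(node.data)})
    return refs


feed = """<?xml version="1.0"?>
<?xml-stylesheet type="text/xsl" href="feed.xsl"?>
<rss version="2.0"><channel><title>Example</title></channel></rss>"""

print(stylesheet_refs(feed))  # [{'type': 'text/xsl', 'href': 'feed.xsl'}]
```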

The same pattern holds for the 15-year-old <legend> vulnerability. This is not a story about careless engineers — Mozilla's team is exceptionally skilled. It's a story about the mathematical limit of human review at scale. When a codebase spans millions of lines, the probability that any reviewer examines a specific function with specific edge-case inputs under specific conditions approaches zero over any realistic review cadence. AI auditing changes that probability at a structural level.
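A toy probability model makes the coverage argument concrete. Assume, purely for illustration, that each review pass checks a random sample of `per_pass` cases out of `combos` possible function/input combinations, and that passes are independent. The chance that one specific case is ever checked is then 1 - (1 - per_pass/combos)^passes. The inputs below are hypothetical, not measurements of Firefox or of Mozilla's process:

```python
def prob_ever_examined(combos: int, per_pass: int, passes: int) -> float:
    """Toy model: each pass checks `per_pass` of `combos` cases uniformly at random.

    Returns the chance that one specific case is checked in at least one pass,
    assuming passes are independent of each other.
    """
    p_single = per_pass / combos  # chance a given pass includes the case
    return 1 - (1 - p_single) ** passes


# Hypothetical inputs: 10 million function/input combinations,
# 1,000 checked per monthly pass, for 20 years (240 passes).
human = prob_ever_examined(10_000_000, 1_000, 240)
# Same horizon, but each pass checks 100x more combinations (also hypothetical).
automated = prob_ever_examined(10_000_000, 100_000, 240)
print(f"{human:.3f} vs {automated:.3f}")
```

With these made-up numbers, 20 years of monthly passes leave a specific case unchecked about 98% of the time, while passes 100 times larger check it about 91% of the time.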

How Firefox Defense-in-Depth Absorbed What Claude Mythos Flagged

Not every vulnerability Claude Mythos identified was exploitable end-to-end. Firefox's defense-in-depth architecture — multiple independent security layers (sandboxing, memory isolation, content security policies) that an attacker must bypass sequentially to achieve real exploitation — blocked many of the attack chains Mythos attempted to trace during the audits.

That's worth noting for two reasons. First, it confirms Firefox's broader security architecture is functioning correctly — the layers are catching what slips through individual component analysis. Second, it means the same tool applied to a less-hardened codebase could produce results far more alarming than the Firefox report. Fewer safety nets means more of what Claude Mythos finds becomes directly exploitable.
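The idea of layers an attacker must bypass in sequence, and why a less-hardened codebase turns more findings into real exploits, can be modeled in a few lines. A minimal sketch; the layer names come from the paragraph above, but their order and the `classify` helper are illustrative assumptions, not how Firefox actually evaluates bugs:

```python
from __future__ import annotations

# Layer names from the text; the order is an illustrative assumption.
FIREFOX_LIKE = ("sandboxing", "memory isolation", "content security policy")
LESS_HARDENED = ("sandboxing",)


def classify(bypassed: set[str], layers: tuple[str, ...]) -> str:
    """Walk the layers in order; the first one the exploit chain cannot bypass contains it."""
    for layer in layers:
        if layer not in bypassed:
            return f"contained by {layer}"
    return "end-to-end exploitable"


# The same hypothetical finding: an exploit chain that only gets past the sandbox.
chain = {"sandboxing"}
print(classify(chain, FIREFOX_LIKE))   # contained by memory isolation
print(classify(chain, LESS_HARDENED))  # end-to-end exploitable
```

The finding itself is identical in both calls; only the number of safety nets changes the verdict.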

For security teams evaluating AI-powered code auditing: Firefox may represent a best-case scenario, not a typical one.

How to Apply AI Security Auditing Without a Custom Harness

Mozilla's deployment required a custom harness built around Claude Mythos. But the underlying principle — structured, formal output instead of free-form suggestions — is available to any team today using off-the-shelf Claude tooling.

Thariq Shihipar, an engineer on the Claude Code team at Anthropic, demonstrated a lightweight version for pull request review:

"Help me review this PR by creating an HTML artifact that describes it… Render the actual diff with inline margin annotations, color-code findings by severity."

The core pattern from both Mozilla's industrial-scale harness and Shihipar's PR review prompt is identical: require the AI to produce actionable, structured findings rather than general observations. Three elements that made Mozilla's results reproducible:

- Targeted input: Feed bounded code sections, not entire repositories at once — the model's attention stays focused on exploitable patterns

- Structured output: Require formal bug report format with reproduction steps and severity classification — this is what eliminated the false-positive noise

- Human verification gate: Every AI finding goes through engineer review before patching — the AI generates the lead, the human confirms it before any code changes
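The three elements can be wired together in a small pipeline. A minimal sketch, assuming a hypothetical `ask_model` callable stands in for whatever model API your team uses (no real SDK call is shown); the 200-line window, the 20-line overlap, and the `ReviewQueue` class are arbitrary illustrative choices, not Mozilla's harness:

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Chunk:
    path: str
    start_line: int  # 1-based, inclusive
    end_line: int    # inclusive
    text: str


def chunk_source(path: str, source: str, max_lines: int = 200, overlap: int = 20) -> list[Chunk]:
    """Targeted input: split a file into bounded, slightly overlapping windows of lines."""
    if not 0 <= overlap < max_lines:
        raise ValueError("overlap must be in [0, max_lines)")
    lines = source.splitlines()
    chunks, start, step = [], 0, max_lines - overlap
    while start < len(lines):
        end = min(start + max_lines, len(lines))
        chunks.append(Chunk(path, start + 1, end, "\n".join(lines[start:end])))
        if end == len(lines):
            break
        start += step
    return chunks


@dataclass
class ReviewQueue:
    """Human verification gate: findings wait here until an engineer confirms them."""
    pending: list[dict] = field(default_factory=list)
    confirmed: list[dict] = field(default_factory=list)

    def submit(self, finding: dict) -> None:
        self.pending.append(finding)

    def confirm(self, index: int) -> dict:
        finding = self.pending.pop(index)
        self.confirmed.append(finding)  # only confirmed findings should become patches
        return finding


def audit(path: str, source: str, ask_model: Callable[[str], list[dict]], queue: ReviewQueue) -> None:
    """Send each chunk with a structured-output instruction; queue every finding for review."""
    for chunk in chunk_source(path, source):
        prompt = (
            f"File {chunk.path}, lines {chunk.start_line}-{chunk.end_line}.\n"
            "Report each finding with component, reproduction_steps, severity, exploitability.\n\n"
            + chunk.text
        )
        for finding in ask_model(prompt):
            finding["location"] = f"{chunk.path}:{chunk.start_line}-{chunk.end_line}"
            queue.submit(finding)


def fake_model(prompt: str) -> list[dict]:
    # Stand-in for a real model call: flags any chunk that contains strcpy.
    return [{"component": "demo", "severity": "high"}] if "strcpy" in prompt else []


src = "\n".join(["int x;"] * 250 + ["strcpy(buf, input);"])
q = ReviewQueue()
audit("demo.c", src, fake_model, q)
print(q.pending)  # one finding located at demo.c:181-251, awaiting review
```

Because `ReviewQueue.confirm` is the only path into `confirmed`, nothing the model reports can reach a patch without an engineer acting on it.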

If your team ships software with any legacy C/C++ components, browser-adjacent code, or modules that haven't seen a focused security audit in several years, the Firefox benchmark suggests those are worth a structured AI pass before the next release. You can explore practical starting points at AI Automation Guides — the structured code review templates there apply the same output-format principles Mozilla used at scale.
