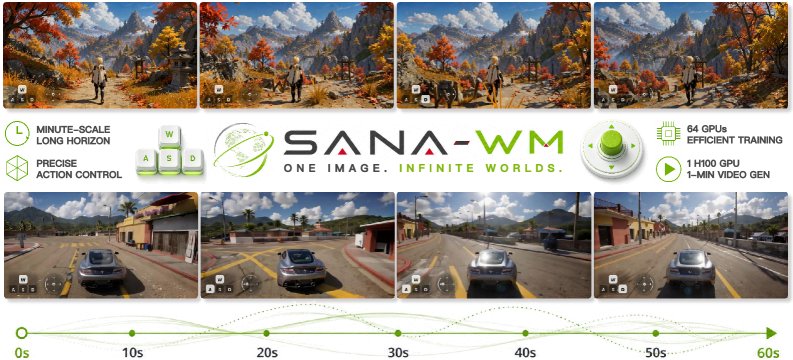

NVIDIA SANA-WM: 720p AI Video on One GPU, 36× Faster

NVIDIA's open-source SANA-WM generates 60-sec 720p AI video on a single GPU — 36× faster than rivals. Free on GitHub. Try it today.

NVIDIA just shipped SANA-WM, an open-source AI video generation world model (a system that generates realistic video from a single image and a set of motion instructions) that produces 60-second, 720p video on a single consumer GPU. Competitors like LingBot-World need 8 GPUs and still deliver lower-resolution 480p output at 40× slower speeds. For anyone working in robotics simulation, game development, or VFX, that gap is the entire budget conversation.

Why Every AI Video Generation Model Hit a Wall

Generating long videos with AI isn't just about model size — it's about memory. Standard attention mechanisms (the process that allows an AI to "focus" on relevant parts of an input while processing it) scale quadratically with sequence length. Double the frames and you need 4× the memory. At 720p, a 60-second video compresses down to 961 latent frames (compressed internal representations the model actually processes — smaller than raw video but enormous in bulk). Standard attention at that scale requires memory no single GPU can provide.

Every major competitor responded the same way: throw more GPUs at it. LingBot-World requires 8 GPUs combined and still only produces 480p output at 0.6 videos per hour. NVIDIA's research team decided to solve the architecture problem instead.

The SANA-WM Architecture That Changes the Equation

SANA-WM replaces most of its expensive attention operations with a Gated DeltaNet (GDN) — a recurrent layer (one that processes data step-by-step with a fixed memory footprint, like reading a book one page at a time rather than scanning all pages simultaneously). Memory cost stays constant regardless of how long the video is. A 10-second clip and a 60-second clip require identical memory to generate.

The backbone uses 20 transformer blocks (the fundamental building units of modern AI models like GPT-4 and Claude). Fifteen of those use the new GDN mechanism; just five use traditional softmax attention — preserving the ability to capture long-range dependencies (connections between things that appear far apart in a sequence) while eliminating the quadratic memory explosion that kills all-attention architectures at scale.

Beyond the attention redesign, the team built custom Triton kernels (low-level GPU code written in NVIDIA's Triton language that interfaces directly with hardware registers for maximum throughput) for the GDN operations, delivering a further 1.5–2× speed gain during training. One critical stabilization technique — algebraic key-scaling (dividing attention keys by √(D·S) to keep numerical values in a stable range) — eliminates the NaN divergence crashes (numerical overflow failures that abort training runs entirely) that plagued early long-sequence experiments.

The full model trained for approximately 15 days on 64 H100 GPUs across four progressive stages. The result: a 2.6B-parameter model that fits inference on a single RTX 5090.

36× Faster — The Full Scorecard

The efficiency gap over competitors isn't incremental — it's structural. Here is the direct comparison across all active world models:

- SANA-WM (2.6B params): 720p output · 1 GPU required · 24.1 videos/hour

- LingBot-World (14B + 14B params): 480p output · 8 GPUs required · 0.6 videos/hour

- HY-WorldPlay (8B params): 480p output · 8 GPUs required · 1.1 videos/hour

- Matrix-Game 3.0 (5B params): 720p output · 8 GPUs required · 3.1 videos/hour

SANA-WM produces 36× more videos per hour than LingBot-World while using roughly 1/10th the model size (2.6B vs 28B total parameters). Visual quality scores 80.62 on VBench (the standard video generation benchmark where higher is better, with 100 as maximum) — within 1.2 points of LingBot-World's 81.82, a gap that is imperceptible in most real-world applications.

The distilled variant (a compressed version trained to replicate the full model's outputs with fewer compute steps) generates a full 60-second 720p clip in just 34 seconds on a single RTX 5090 using NVFP4 quantization (a compression technique that reduces internal number precision from 32-bit to 4-bit, dramatically cutting memory use with minimal visual quality loss). Full two-stage pipeline memory: 74.7 GB — engineered to fit within an H100's 80 GB budget.

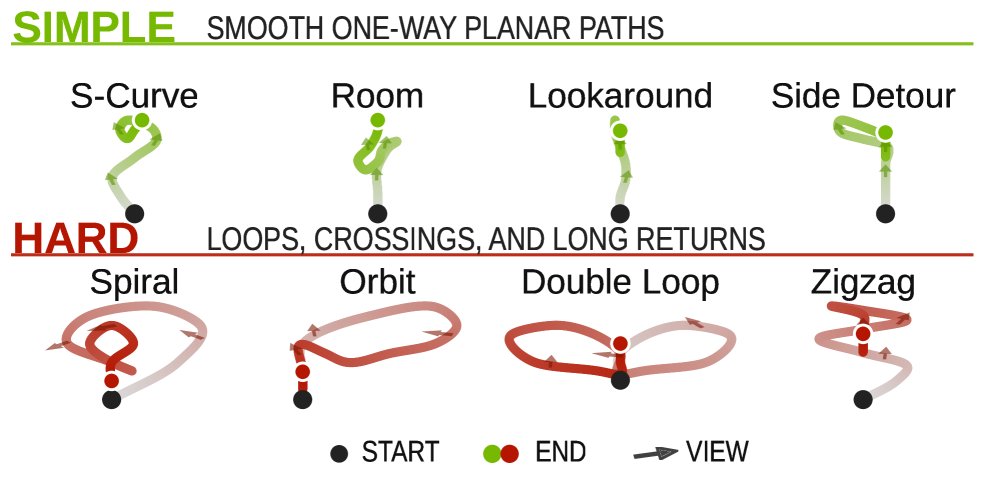

Precise Camera Control Built In

SANA-WM doesn't just generate video — it gives you 6-DoF camera control (six degrees of freedom: move forward/backward, left/right, up/down, plus pitch/yaw/roll — the same axis control a physical film camera offers). This matters enormously for robotics and simulation work, where training environments need footage from precisely defined camera angles to teach agents how to navigate real spaces.

The system uses a dual-branch architecture:

- UCPE coarse branch: defines the overall camera trajectory — the broad path the camera follows across the full 60-second clip

- Plücker fine branch: handles per-stride micro-corrections — the subtle intra-segment adjustments that make camera motion look physically realistic

On hard scenarios (complex, non-linear camera paths), rotation error comes in at 8.34° and translation error at 1.39 — competitive with models using far greater compute budgets. The annotation pipeline that produced training data processed 212,975 video clips across 7 datasets, generating per-frame metric-scale pose annotations (exact real-world distance measurements, not just relative depth estimates) using Pi3X depth estimation fused with MoGe-2 for accurate per-frame calibration.

Two-Stage Refinement

Stage 1 generates the base 60-second sequence. Stage 2 refines it using a component initialized from the 17B LTX-2 model with rank-384 LoRA adapters (LoRA, or Low-Rank Adaptation, is a technique for fine-tuning AI models by adding a tiny set of additional parameters instead of retraining the whole model — like adding a specialist overlay on top of a general foundation). Stage 1 alone runs in 51.1 GB of GPU memory, making it viable on hardware slightly older than the H100.

Try NVIDIA SANA-WM on Your Own GPU Today

SANA-WM is fully open-source on NVlabs/Sana on GitHub. The complete technical paper is on arXiv. You need a single RTX 5090 for the distilled variant, or 8× H100s for the full two-stage pipeline.

# Clone NVIDIA Sana repository

git clone https://github.com/NVlabs/Sana.git

cd Sana

# Install dependencies (requires CUDA-compatible GPU)

pip install -r requirements.txt

# Run distilled variant — generates 60s 720p video in ~34 seconds

python inference.py \

--model sana-wm-distilled \

--input_image your_frame.jpg \

--quantization nvfp4

# Full camera control with 6-DoF trajectory file

python inference.py \

--model sana-wm-720p \

--input_image your_frame.jpg \

--camera_trajectory trajectory.jsonIf you have been waiting for generative video that does not require a server rack, you can test this today. The efficiency gap between SANA-WM and its closest competitor is 36× — large enough to restructure entire production budgets. Browse the guides on AI automation workflows to see how video generation models like this fit into real pipelines.

Related Content — Get Started | Guides | More News

Stay updated on AI news

Simple explanations of the latest AI developments