This AI solves puzzles GPT and Claude can't — at 97% accuracy

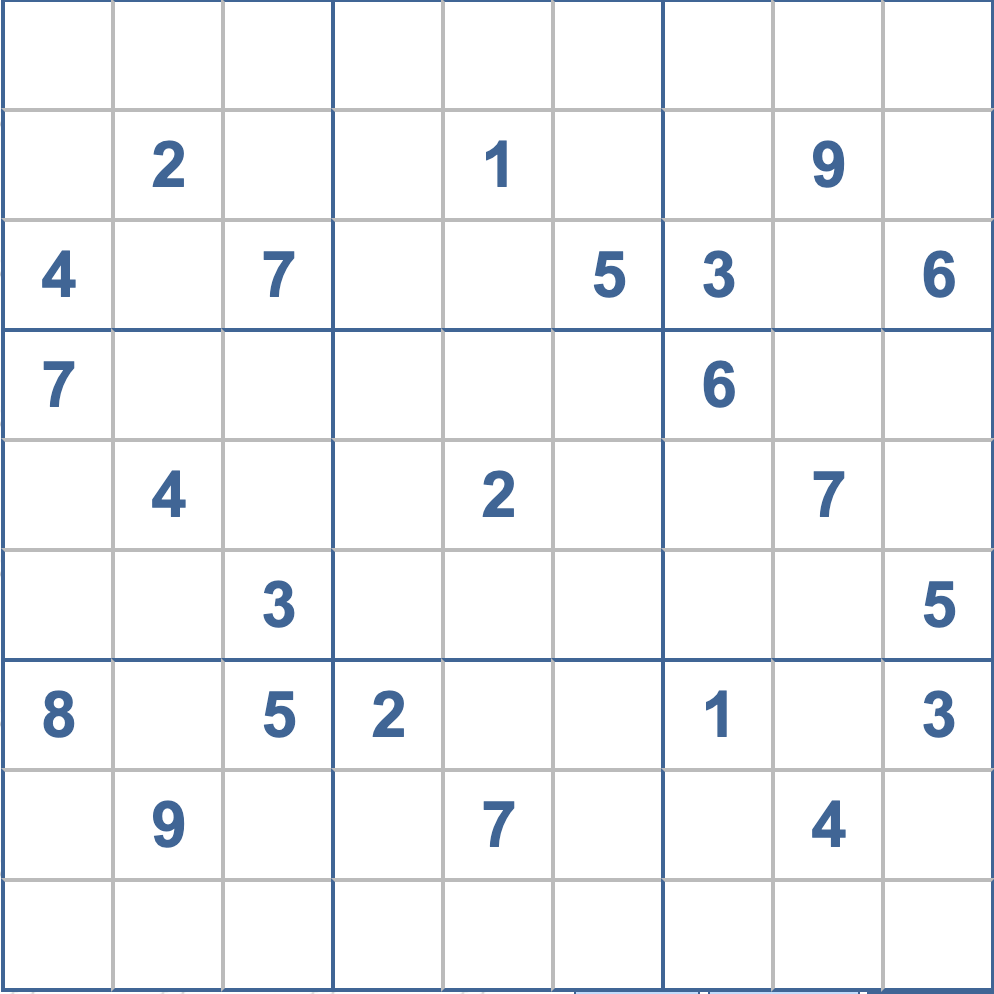

Pathway's BDH architecture scores 97.4% on extreme Sudoku puzzles while GPT, Claude, and DeepSeek score near 0%. A post-transformer approach that reasons without words.

A company called Pathway just published results for a new AI architecture that crushes a reasoning test every major AI model fails. Their model, called BDH (Baby Dragon Hatchling), scored 97.4% accuracy on roughly 250,000 extremely difficult Sudoku puzzles — the kind that require deep logical reasoning and backtracking.

For comparison: OpenAI's o3-mini, DeepSeek R1, and Claude 3.7 all scored approximately 0% on the same test. Not low: essentially zero.

Why Sudoku breaks today's AI

Sudoku might seem like a simple newspaper puzzle, but extreme-difficulty Sudoku is actually one of the hardest tests for AI reasoning. It requires holding multiple possibilities in your head simultaneously, checking rules across rows, columns, and boxes, and backtracking when you hit a dead end.
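To make "checking rules" and "backtracking" concrete, here is a minimal sketch of a classical backtracking solver in Python. It is not how BDH or any LLM works; it only illustrates the kind of search these puzzles demand. The function names and the sample puzzle are illustrative choices, and the sample is an ordinary grid, not one from Pathway's extreme-difficulty benchmark.

```python
from typing import List, Optional, Tuple

Grid = List[List[int]]  # 9x9 grid; 0 marks an empty cell

# Illustrative sample puzzle (an assumption, not taken from Pathway's test set).
PUZZLE: Grid = [
    [5, 3, 0, 0, 7, 0, 0, 0, 0],
    [6, 0, 0, 1, 9, 5, 0, 0, 0],
    [0, 9, 8, 0, 0, 0, 0, 6, 0],
    [8, 0, 0, 0, 6, 0, 0, 0, 3],
    [4, 0, 0, 8, 0, 3, 0, 0, 1],
    [7, 0, 0, 0, 2, 0, 0, 0, 6],
    [0, 6, 0, 0, 0, 0, 2, 8, 0],
    [0, 0, 0, 4, 1, 9, 0, 0, 5],
    [0, 0, 0, 0, 8, 0, 0, 7, 9],
]


def is_allowed(grid: Grid, row: int, col: int, digit: int) -> bool:
    """Check the row, column, and 3x3 box rules for one candidate digit."""
    if any(grid[row][c] == digit for c in range(9)):
        return False
    if any(grid[r][col] == digit for r in range(9)):
        return False
    top, left = 3 * (row // 3), 3 * (col // 3)
    for r in range(top, top + 3):
        for c in range(left, left + 3):
            if grid[r][c] == digit:
                return False
    return True


def find_empty(grid: Grid) -> Optional[Tuple[int, int]]:
    """Return the first empty cell in reading order, or None if the grid is full."""
    for r in range(9):
        for c in range(9):
            if grid[r][c] == 0:
                return r, c
    return None


def solve(grid: Grid) -> bool:
    """Fill the grid in place; return True once a complete solution is found."""
    cell = find_empty(grid)
    if cell is None:
        return True  # no empty cells left: solved
    row, col = cell
    for digit in range(1, 10):
        if is_allowed(grid, row, col, digit):
            grid[row][col] = digit      # tentative guess
            if solve(grid):
                return True
            grid[row][col] = 0          # dead end: undo the guess and try the next digit
    return False                        # no digit fits here, so the caller must backtrack


if __name__ == "__main__":
    board = [row[:] for row in PUZZLE]
    if solve(board):
        for line in board:
            print(" ".join(str(d) for d in line))
```

The key line is the one that resets a cell to 0: the solver commits to a guess, explores its consequences, and retracts it when a contradiction appears. A model that only emits its reasoning one token at a time has to reproduce that bookkeeping in text.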

Today's large language models (LLMs), including ChatGPT, Claude, and Gemini, think by generating one token (roughly a word or word fragment) at a time. They can't truly "hold" multiple options and compare them. It's like trying to solve a maze by only looking one step ahead, without ever being able to see the full picture.

That's why even the most powerful AI models with "chain-of-thought" reasoning (a technique where the AI writes out its thinking step by step) still fail at these puzzles. The problem isn't intelligence — it's architecture.

How BDH thinks differently

BDH doesn't generate text to reason. Instead, it works in what researchers call a "latent reasoning space" — think of it as an internal scratchpad where the AI can juggle thousands of possibilities at once without writing anything down.

Transformer vs. BDH — the key difference

Transformer (GPT, Claude, Gemini): Reasons by writing tokens, one at a time. Limited to ~1,000 internal values per step. Like solving a puzzle while narrating every thought out loud.

BDH: Reasons in an expanded internal space. Can hold multiple solution candidates simultaneously and make intuitive leaps. Like a human who "sees" the answer before articulating it.

According to Pathway, this design also makes BDH about 10x cheaper to run than chain-of-thought models, because it doesn't need to generate hundreds of reasoning tokens to reach an answer.

It learns from every interaction — in 20 minutes

Perhaps the most striking feature: BDH learns continuously. When you give it a new type of problem, it reaches "advanced beginner" level within 20 minutes and keeps improving with each attempt. Current LLMs, by contrast, have their weights frozen after training, so they can't permanently learn from your conversations.

Pathway calls this "continual learning," and it's one of the biggest unsolved problems in AI. If BDH has genuinely cracked it, the implications go far beyond Sudoku.

Why this matters beyond puzzles

Sudoku is a proxy for real-world constraint satisfaction — the same type of reasoning needed for:

- Medical protocols — checking drug interactions across multiple conditions simultaneously

- Legal reasoning — ensuring a contract satisfies all regulatory requirements at once

- Supply chain planning — optimizing schedules with hundreds of constraints

- Strategic planning — evaluating multiple scenarios before committing to a path

If an AI can reliably handle constraint satisfaction, it opens doors for automation in fields where today's LLMs are too unreliable.
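The link between Sudoku and these fields is easier to see in code. The sketch below applies the same guess, check, and undo loop to a toy scheduling problem. Everything in it is hypothetical: the task names, the three time slots, and the helper functions `before` and `different` are made up for illustration, and this is a classical search technique, not Pathway's method.

```python
from typing import Callable, Dict, List, Optional

Assignment = Dict[str, int]            # task name -> time slot
Constraint = Callable[[Assignment], bool]


def before(a: str, b: str) -> Constraint:
    """Task a must be scheduled in an earlier slot than task b."""
    # Unassigned tasks cannot violate the rule yet, so the check passes.
    return lambda s: a not in s or b not in s or s[a] < s[b]


def different(a: str, b: str) -> Constraint:
    """Tasks a and b must not share a slot."""
    return lambda s: a not in s or b not in s or s[a] != s[b]


def backtrack(
    variables: List[str],
    domains: Dict[str, List[int]],
    constraints: List[Constraint],
    assignment: Optional[Assignment] = None,
) -> Optional[Assignment]:
    """Return one assignment satisfying every constraint, or None if none exists."""
    assignment = {} if assignment is None else assignment
    if len(assignment) == len(variables):
        return dict(assignment)
    var = next(v for v in variables if v not in assignment)
    for value in domains[var]:
        assignment[var] = value                      # tentative choice
        if all(check(assignment) for check in constraints):
            result = backtrack(variables, domains, constraints, assignment)
            if result is not None:
                return result
        del assignment[var]                          # undo and try the next value
    return None


# Hypothetical toy problem: four tasks, three time slots.
tasks = ["order", "pack", "ship", "audit"]
slots = {task: [1, 2, 3] for task in tasks}
rules = [
    before("order", "pack"),
    before("pack", "ship"),
    different("audit", "pack"),
    different("audit", "ship"),
]

schedule = backtrack(tasks, slots, rules)
print(schedule)
```

Swapping the rules for drug-interaction checks or contract clauses changes the constraints, not the shape of the search. Real problems in these fields have far more variables, which is where naive backtracking becomes too slow and stronger reasoning methods start to matter.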

Pathway: not just a research lab

Pathway isn't a newcomer. Their open-source data processing framework has over 60,000 GitHub stars and is used for building real-time data pipelines and RAG systems (tools that let AI answer questions using your own documents). The company has been featured in the Wall Street Journal and Forbes.

BDH is their research bet that the transformer architecture — the foundation of every major AI model since 2017 — has hit its ceiling for certain types of reasoning. Their research paper details the full architecture.

The bigger picture: is the transformer era ending?

BDH isn't the only challenge to transformers. Mamba-3, a state-space model, recently showed transformers can be matched with half the memory, and state-space models more broadly are gaining traction. And now BDH demonstrates that for constraint-heavy reasoning, transformers may be fundamentally limited.

None of these will replace ChatGPT for writing emails tomorrow. But they signal that the next generation of AI won't just be "bigger GPT" — it'll be architecturally different.

For anyone building AI products or making decisions about which AI to adopt: keep watching this space. The models that power your tools in 2027 may look nothing like today's.
