Hachette just killed a horror novel over suspected AI writing

Hachette pulled horror novel Shy Girl after the New York Times presented evidence suggesting AI involvement. The author blames her editor, but the book is gone.

One of the world's biggest publishers just pulled a book off shelves — not because of bad sales, but because readers suspected a machine wrote it.

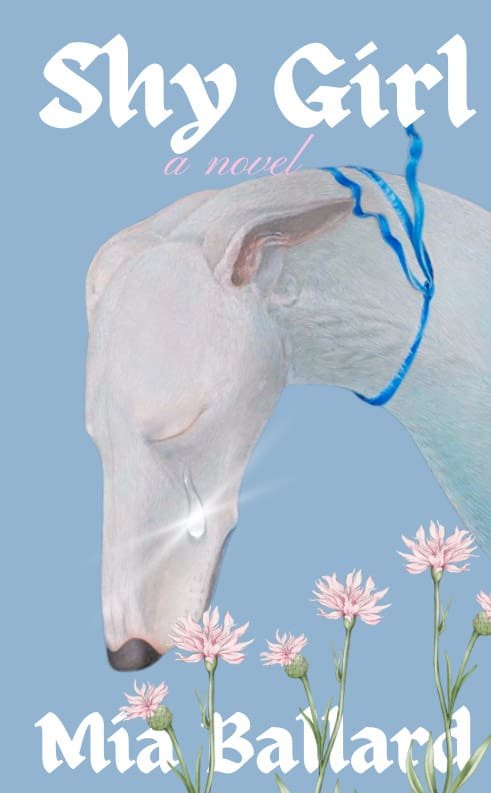

Hachette Book Group, one of the 'Big Five' publishers, cancelled the U.S. release of horror novel Shy Girl by Mia Ballard and removed all existing copies from sale in the UK. The reason: mounting evidence that the book was written with the help of AI.

How readers caught it

The novel was originally self-published in February 2025 and quickly gained attention — racking up 4,907 ratings on Goodreads with a 3.51-star average. But as more people read it, red flags appeared. Reviewers flagged "generic and confusing metaphors" and "repetitive phrasing" that felt machine-generated.

One reviewer wrote simply: "Really bad. Pretty sure this was AI generated."

Then came the bombshell. In January 2026, a BookTuber named Frankie's Shelf posted a nearly 3-hour video dissecting the novel's language patterns. That video went viral — crossing 1 million views — and turned a quiet suspicion into a full-blown publishing controversy.

The New York Times steps in

On March 19, 2026, the New York Times approached Hachette with evidence suggesting AI involvement. Within 24 hours, the publisher pulled the plug.

Hachette stated it "remains committed to protecting original creative expression and storytelling" and requires all authors to disclose any AI use during the writing process.

The U.S. edition — set to be published through Hachette's Orbit imprint — was cancelled entirely. The UK edition, which had already sold 1,800 print copies since its November 2025 release, was discontinued and removed from Amazon and the publisher's website.

The author's defense — and why it's complicated

Ballard denies writing with AI. Her explanation: she hired an acquaintance to edit the self-published version, and that person used AI tools without her knowledge. She says she's pursuing legal action against the editor and can't share more details.

The controversy has also revealed a cover art problem. The original self-published cover used imagery from a painting called "Dreamer" by artist Whyn Lewis — allegedly without permission. Both the UK and U.S. editions later used new artwork, but the damage was done.

Why this is a turning point for publishing

This isn't the first time AI-generated content has caused problems. Amazon has been flooded with AI-written books. But Shy Girl is different: it's the first time a Big Five publisher has been caught with an AI-suspected title on its roster.

The incident raises uncomfortable questions:

- Can publishers detect AI writing? Hachette apparently couldn't until a YouTuber and the New York Times did the work for them.

- Who is responsible? If an author's editor uses AI, is the author still accountable?

- What happens to trust? Readers who bought and enjoyed the book are now questioning whether their emotional response was to a machine's output.

If you create content — pay attention

This case matters beyond publishing. Whether you're a freelance writer, a marketer creating copy, or a designer working with AI tools — the lesson is the same: disclosure is becoming non-negotiable. Major platforms and publishers are tightening AI-use policies, and the public is getting better at spotting AI-generated work.

For authors specifically: Hachette says it requires all authors to disclose any AI use during the writing process. Other publishers are expected to follow.
